Enterprises are deploying AI agents faster than they can govern them. According to OutSystems' 2026 State of AI Development report, 97% of organizations are exploring agentic AI — but only 36% have a centralized governance approach, and just 12% use a central platform to control AI sprawl. This guide explains what AI automation governance actually means in 2026, the seven control layers every enterprise needs, and how to embed them directly into your automation builder instead of bolting them on after deployment.

Why AI automation governance is the defining IT challenge of 2026

Agentic AI does not wait for a prompt. It reasons, plans, and acts — often across systems it was never explicitly granted access to. That shift has created a gap that most IT leaders now recognize but few have closed: adoption is running ahead of oversight.

The numbers tell the story. OutSystems' 2026 survey of 1,879 IT leaders found that 73% express high or moderate trust in agents acting autonomously, up from 40% the year before. Deloitte's 2026 report, cited by Galileo, found that only 20% of organizations have mature governance models. IBM's 2026 goals for AI leaders put it plainly: the companies that win the agentic pivot will be the ones that treat governance not as a compliance checkbox but as an operational discipline.

This is especially acute for IT teams. Unlike marketing or sales, IT automations touch production systems, identity infrastructure, sensitive user data, and revenue-critical workflows. A hallucinated refund is embarrassing. A hallucinated password reset that locks out the CFO on a Monday morning is a board-level incident.

So the question for IT leaders in 2026 is not whether to deploy AI agents. It is: how do we deploy them in a way our auditors, our CISO, and our board can actually trust?

What "AI automation governance" actually means

AI automation governance is the set of policies, controls, observability systems, and enforcement mechanisms that ensure an AI agent behaves within defined boundaries — from the moment it is built to every runtime decision it makes in production.

It is not the same as traditional IT governance. Traditional controls were designed for deterministic systems: if-this-then-that logic that behaves identically every time. AI agents are non-deterministic. They learn, adapt, and make judgment calls. That means governance has to move from preventing bad code from shipping to constraining behavior in real time.

Governance applies at three stages of an agent's life, and it helps to separate them clearly:

- Design-time governance: who can build agents, what data they can use, what actions they can take.

- Runtime governance: how the agent behaves in production, what guardrails intercept unsafe outputs, how decisions are logged.

- Lifecycle governance: how agents are versioned, retired, monitored for drift, and continuously evaluated.

Most enterprises have some version of design-time governance. Very few have all three working together.

The 7 control layers every enterprise AI automation needs

Drawing on frameworks from IBM, the Cloud Security Alliance, and AI governance research from 2025–2026, here is the practical checklist IT leaders should apply to any AI automation — whether it's a ticket deflection agent, a password reset workflow, or a change management approval bot.

1. Identity and access controls

Every agent needs a verifiable identity, scoped permissions, and least-privilege defaults. Frame the agent as a digital employee, not a tool — it gets onboarded, credentialed, and offboarded the same way a contractor would. ISACA's 2026 guidance goes further: embed agents into your Zero Trust architecture rather than bolting them onto the edge of it.
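
To make the "digital employee" framing concrete, here is a minimal Python sketch of a deny-by-default permission check. The `AgentIdentity` class, the action names, and the `offboard` helper are all hypothetical illustrations, not part of any specific platform; a real deployment would back this with your identity provider.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical identity record for an agent, treated like a contractor account."""
    agent_id: str
    owner: str
    allowed_actions: frozenset = field(default_factory=frozenset)
    active: bool = True


def is_permitted(agent: AgentIdentity, action: str) -> bool:
    """Deny by default: only explicitly granted actions on an active identity pass."""
    return agent.active and action in agent.allowed_actions


def offboard(agent: AgentIdentity) -> AgentIdentity:
    """Return a deactivated copy with every permission revoked."""
    return AgentIdentity(agent.agent_id, agent.owner, frozenset(), active=False)


# Example identity; the ID, owner, and action names are placeholders.
helpdesk_bot = AgentIdentity(
    agent_id="agent-helpdesk-01",
    owner="it-ops",
    allowed_actions=frozenset({"ticket.read", "password.reset"}),
)
```

The point of the design is that a new agent starts with an empty permission set, so anything not granted on purpose is refused.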

2. Data governance and PII protection

Agents should never receive data they don't need, and they should never surface data the requester isn't entitled to see. This means tokenization of sensitive fields before they reach the model, PII redaction on both inputs and outputs, and enforced DLP policies at the prompt layer — not just at the storage layer.
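
As a rough illustration of redaction on both sides of the model, the sketch below masks two kinds of PII before a prompt is sent and again before the response is returned. The two regular expressions and the `guarded_call` wrapper are assumptions made for this example; production DLP would rely on a vetted detection service rather than a pair of patterns.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each detected PII value with a bracketed label."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def guarded_call(model, prompt: str) -> str:
    """Redact the prompt before the model sees it, then redact the model's output."""
    return redact(model(redact(prompt)))
```

Here `model` is any callable that takes a prompt string and returns a response string, so the same wrapper works with a stub in tests and a real client in production.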

3. Action guardrails and approval workflows

Not every action should be autonomous. High-risk actions (disabling accounts, modifying production config, processing refunds above a threshold) should route through human-in-the-loop approvals. Low-risk, high-volume actions (password resets, status updates, knowledge lookups) can run autonomously once validated. The governance framework defines which bucket each action falls into.
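
The two buckets can be expressed as a small routing function. This is a sketch under stated assumptions: the action names, the refund threshold of 100.00, and the `Route` enum are placeholders chosen for illustration, and your own policy table would replace them.

```python
from enum import Enum


class Route(Enum):
    AUTONOMOUS = "autonomous"
    HUMAN_APPROVAL = "human_approval"


# Hypothetical policy table; names and threshold are placeholders.
HIGH_RISK_ACTIONS = {"account.disable", "prod_config.modify"}
LOW_RISK_ACTIONS = {"password.reset", "status.update", "knowledge.lookup"}
REFUND_APPROVAL_THRESHOLD = 100.00


def route_action(action: str, amount: float = 0.0) -> Route:
    """Decide whether an action may run on its own or needs a human reviewer."""
    if action == "refund.process":
        # Refunds are autonomous only at or below the threshold.
        if amount > REFUND_APPROVAL_THRESHOLD:
            return Route.HUMAN_APPROVAL
        return Route.AUTONOMOUS
    if action in HIGH_RISK_ACTIONS:
        return Route.HUMAN_APPROVAL
    if action in LOW_RISK_ACTIONS:
        return Route.AUTONOMOUS
    # Unclassified actions fail closed: they go to a human until someone classifies them.
    return Route.HUMAN_APPROVAL
```

Failing closed on unknown actions is a deliberate choice here: an action nobody has classified is treated as high risk rather than silently allowed.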

4. Runtime guardrails for unsafe outputs

Modern guardrails platforms intercept unsafe inputs and outputs in real time — typically under 200 milliseconds — and block prompt injections, hallucinated claims, and policy violations before they reach users. CSA's December 2025 guidance describes prompt guardrails as the control point between human intent and machine interpretation, and treats them as non-negotiable for any enterprise GenAI deployment.

5. Audit trails and decision traceability

If an agent denies a user's access request, a compliance officer needs to reconstruct why six months later. That means logging the input, the reasoning trace, the tools invoked, the outputs generated, and the final action — in a format that survives model updates and supports regulatory review. IBM's 2026 framework calls this "embedding accountability into the process" rather than auditing after the fact.
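
A decision record along these lines might look like the following sketch. The field names are hypothetical, and the hash chaining is an added assumption (one common way to make a log tamper-evident), not something the frameworks cited above prescribe. Recording `model_version` alongside each decision is what lets the record stay interpretable after the model is updated.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical audit entry covering the elements listed above."""
    agent_id: str
    model_version: str
    user_input: str
    reasoning_trace: str
    tools_invoked: tuple
    output: str
    final_action: str
    timestamp: str


def _digest(body: dict, prev_hash: str) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    payload = json.dumps(body, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def serialize(record: DecisionRecord, prev_hash: str) -> dict:
    """Build a JSON-ready log entry chained to the previous entry's hash."""
    body = asdict(record)
    return {"record": body, "prev_hash": prev_hash, "hash": _digest(body, prev_hash)}


def verify(entry: dict) -> bool:
    """Recompute the hash to detect edits made after the entry was written."""
    return _digest(entry["record"], entry["prev_hash"]) == entry["hash"]
```

Because each entry's hash includes the previous entry's hash, changing one record after the fact invalidates it and every entry that follows.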

6. Continuous evaluation and drift detection

Agents degrade. Prompts that worked in January hallucinate in June. Governance requires pre-production stress tests for jailbreaks and prompt injection, runtime monitoring for accuracy and drift, and automated re-evaluation when underlying models are updated. This is the shift from point-in-time certification to continuous compliance.
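
One simple way to picture runtime drift monitoring is a rolling accuracy window compared against a baseline measured before launch. The sketch below assumes you already have some way to label each response as correct or not (for example, sampled human review); the `DriftMonitor` class, window size, and tolerance are illustrative choices, not recommended values.

```python
from collections import deque


class DriftMonitor:
    """Flags drift when rolling accuracy falls too far below the baseline."""

    def __init__(self, baseline_accuracy: float, window: int = 100, tolerance: float = 0.05):
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        # deque with maxlen keeps only the most recent `window` results.
        self.results = deque(maxlen=window)

    def record(self, correct: bool) -> None:
        self.results.append(1 if correct else 0)

    def accuracy(self) -> float:
        if not self.results:
            return self.baseline
        return sum(self.results) / len(self.results)

    def drifted(self) -> bool:
        # Wait for a full window so a few early misses do not trigger an alert.
        full = len(self.results) == self.results.maxlen
        return full and (self.baseline - self.accuracy()) > self.tolerance
```

A monitor like this would typically be reset, and the pre-production evaluation rerun, whenever the underlying model version changes.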

7. Compliance alignment

ISO/IEC 42001, the EU AI Act, the NIST AI RMF, SOC 2, HIPAA, and GDPR all now carry explicit or implicit requirements for AI systems. Governance frameworks need to map every control back to the specific regulation it satisfies, so that audit evidence is generated automatically rather than assembled under duress.
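
A control-to-regulation map can start as a plain lookup table. In the sketch below, the control names, evidence sources, and especially the pairings of controls to frameworks are placeholders for illustration only; they are not a legal mapping, and your compliance team would own the real one.

```python
# Hypothetical mapping; pairings are illustrative, not legal guidance.
CONTROL_MAP = {
    "pii_redaction": {
        "frameworks": ["GDPR", "HIPAA"],
        "evidence": ["redaction_log"],
    },
    "decision_audit_trail": {
        "frameworks": ["SOC 2", "EU AI Act"],
        "evidence": ["decision_records"],
    },
    "drift_monitoring": {
        "frameworks": ["NIST AI RMF", "ISO/IEC 42001"],
        "evidence": [],  # no automated evidence source wired up yet
    },
}


def controls_for(framework: str) -> list:
    """List the controls mapped to a given framework."""
    return sorted(name for name, c in CONTROL_MAP.items() if framework in c["frameworks"])


def evidence_gaps() -> list:
    """List controls with no evidence source, i.e. what an audit would have to chase by hand."""
    return sorted(name for name, c in CONTROL_MAP.items() if not c["evidence"])
```

Running `evidence_gaps()` on a schedule is one way to find out before the auditor does which controls still depend on manually assembled evidence.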

Where most governance efforts break down

The common failure pattern looks like this: governance lives in one place (a policy document, a CISO-owned review board, a separate AI review committee), while agent building lives somewhere else entirely (a no-code platform, a developer's local environment, a business-unit automation team).

The result is exactly the gap OutSystems measured: awareness without enforcement. Policies exist, but they are not embedded into the tools people use to build agents, so they get bypassed — usually unintentionally — every time someone needs to ship something quickly.

Forward-looking enterprises are closing this gap by embedding governance mechanisms directly into the automation layer itself. Controls are not reviewed after the agent is built. They are the environment the agent is built in. Every workflow automatically generates documentation. Every sensitive action is surfaced for review. Every audit question has a pre-built answer.

This shift — from governance as a gate to governance as infrastructure — is what separates the 36% who have centralized AI governance from the 64% who do not.

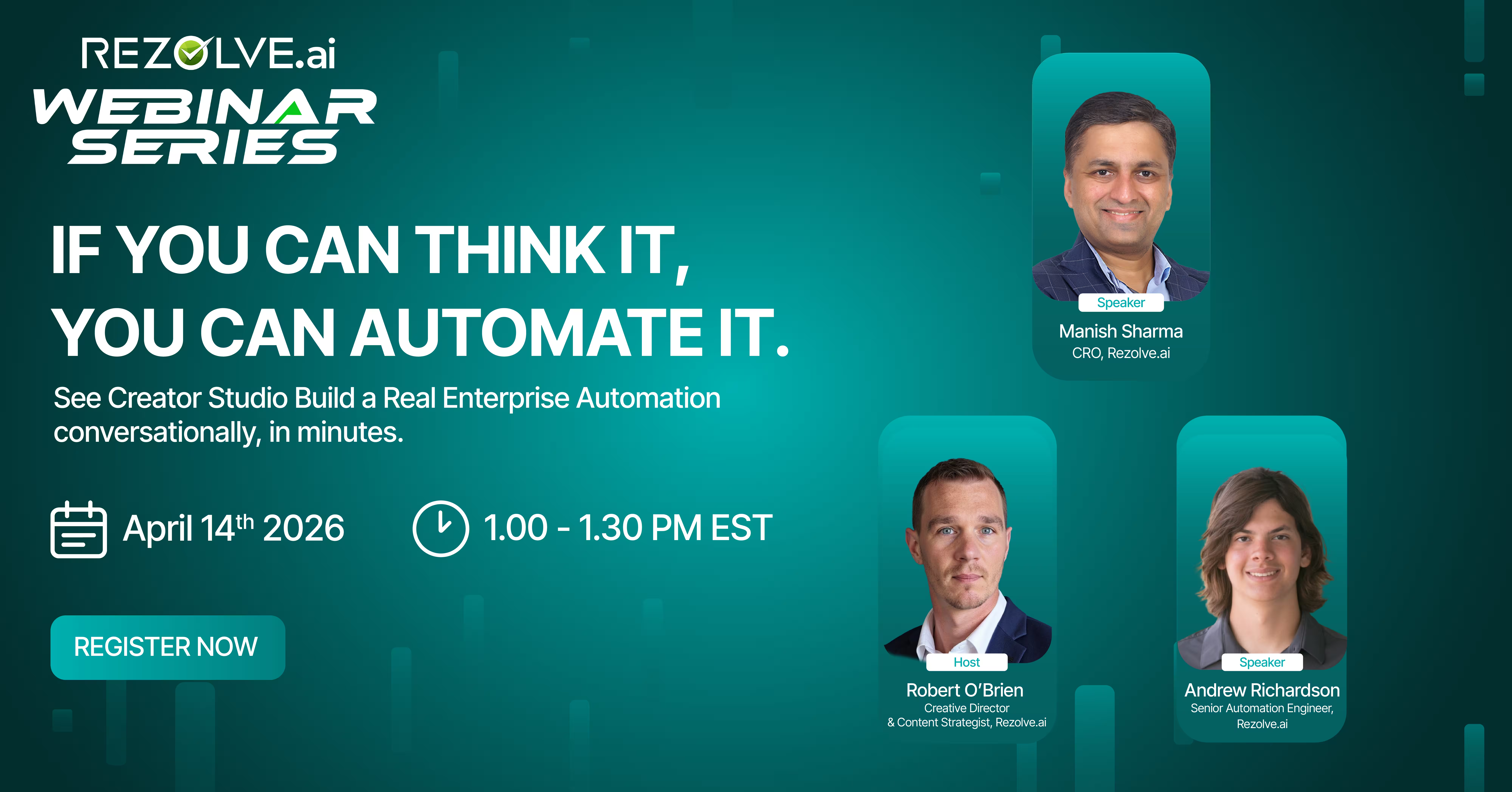

How Rezolve Creator Studio embeds governance into no-code AI automation

Rezolve Creator Studio is the no-code automation builder inside the Rezolve.ai Agentic Service Management platform. It is designed for IT teams who need to ship AI automations quickly but cannot afford to compromise on the seven control layers above. Governance is not a separate module — it is built into the fabric of how automations are designed, tested, and deployed.

Here is how the seven controls map to what teams get in Rezolve Creator Studio:

- Identity and access. Role-based access control defines who can build, approve, and deploy automations. Every agent inherits scoped permissions from your existing identity provider.

- Data governance. Sensitive fields are masked by default. PII handling policies are enforced at the workflow layer, not left to individual builders to remember.

- Action guardrails. Every workflow supports configurable approval steps. High-risk actions route through human reviewers automatically, while low-risk actions run autonomously with full logging.

- Runtime guardrails. The platform's guardrails layer intercepts unsafe outputs, blocks prompt injection attempts, and prevents hallucinated responses from reaching end users.

- Audit trails. Every automation generates a complete decision trace — inputs, tool calls, reasoning, outputs, and final actions — queryable by compliance and IT ops alike.

- Continuous evaluation. Automations can be tested against scenario libraries before deployment and monitored for drift in production.

- Compliance alignment. The underlying Rezolve.ai platform is built to support enterprise compliance requirements, with documentation generated automatically as workflows are built.

The design principle is simple: if IT teams have to choose between shipping fast and governing properly, governance loses. So Rezolve Creator Studio makes the governed path the default path.

A practical framework: the 4-question governance test

Before deploying any AI automation — whether you use Rezolve Creator Studio or another platform — run it through this four-question test. If you cannot answer all four clearly, the automation is not ready for production.

1. What exactly can this agent do, and what is it forbidden from doing? If the answer involves hand-waving about "the model will figure it out," you have a scope problem. Define the action space explicitly.

2. What data does it see, and what does it expose? Trace every input and every output. Confirm that sensitive fields are masked, that outputs cannot leak data across user boundaries, and that PII handling matches your data classification policy.

3. How will we know when it goes wrong — and who gets paged? Define the observability layer before you ship. Drift, hallucination rate, and policy violations should all be monitored continuously, with clear ownership for response.

4. If a regulator asked us to explain a decision this agent made six months ago, could we? If the answer requires reconstructing logs from three different systems and hoping they align, your audit trail is not good enough. The decision record should be queryable in one place, in plain language.
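
The four questions can also be enforced as a lightweight release gate. This is a sketch only: the `GovernanceReview` form and its field names are hypothetical, and it checks merely that each question has a written answer, not that the answer is any good.

```python
from dataclasses import dataclass, fields


@dataclass
class GovernanceReview:
    """Hypothetical review form: one written answer per question in the test."""
    action_scope: str = ""
    data_exposure: str = ""
    alerting_and_ownership: str = ""
    decision_explainability: str = ""


def unanswered(review: GovernanceReview) -> list:
    """Return the questions that are still blank, in the order they are asked."""
    return [f.name for f in fields(review) if not getattr(review, f.name).strip()]


def ready_for_production(review: GovernanceReview) -> bool:
    """An automation passes only when all four questions have an answer."""
    return not unanswered(review)
```

Wiring a check like this into the deployment pipeline turns the test from a suggestion into a precondition.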

The bottom line: trust is the moat

The enterprises that will scale AI automation fastest in 2026 are not the ones with the most aggressive adoption targets. They are the ones whose governance frameworks let their IT teams say yes to automation requests with confidence — because every automation they ship is auditable, observable, and bounded by design.

Governance is not a tax on innovation. It is what makes innovation sustainable at enterprise scale. The goal is not to slow your teams down. It is to build an environment where the fast path and the safe path are the same path.

That is the standard Rezolve Creator Studio is built to meet — and it is the standard every IT leader should hold their AI automation stack to in 2026.

Ready to see governed AI automation in action? Book a demo of Rezolve Creator Studio →

See how IT teams are shipping AI automations in days instead of quarters — without compromising on audit, compliance, or control.

FAQ

1. What is AI automation governance?

A. AI automation governance is the framework of policies, controls, guardrails, and audit mechanisms that ensures AI agents behave within defined boundaries throughout their lifecycle — from design to deployment to retirement. It covers identity, data handling, action permissions, runtime safety, audit trails, continuous evaluation, and regulatory compliance.

2. How is AI governance different from traditional IT governance?

A. Traditional IT governance was designed for deterministic systems that behave the same way every time. AI agents are non-deterministic — they learn, adapt, and make judgment calls. This means governance has to move from preventing bad code from shipping to constraining agent behavior in real time, with continuous monitoring for drift.

3. What are AI guardrails?

A. AI guardrails are technical and procedural controls that set boundaries on AI system behavior. They intercept unsafe inputs and outputs in real time, block prompt injections, prevent data leakage, redact PII, and enforce organizational policies — typically in under 200 milliseconds per request.

4. What percentage of enterprises have centralized AI governance?

A. According to OutSystems' 2026 State of AI Development report, only 36% of organizations have a centralized approach to agentic AI governance, and just 12% use a centralized platform to maintain control over AI sprawl — even though 97% are actively exploring agentic AI strategies.

5. Does adding governance slow down AI deployment?

A. Counterintuitively, no. When governance is embedded into the automation layer itself — rather than bolted on as a separate review gate — it reduces the manual oversight required per automation, accelerates approval cycles, and lets IT teams ship faster with higher confidence. The slow path is ungoverned automation that gets blocked during security review.

6. How does Rezolve Creator Studio handle AI governance?

A. Rezolve Creator Studio embeds governance directly into the no-code automation builder. Role-based access controls, approval workflows, runtime guardrails, audit trails, and continuous evaluation are built into the platform rather than added on top. This means every automation built in Rezolve Creator Studio is governed by default.
