Agentic AI is transforming how enterprises operate — not just in IT, but across marketing, product development, customer service, and operations. The benefits are enormous, but real risks exist: data leakage to LLM training, prompt manipulation, shadow AI sprawl, and agent privilege issues. The good news? These risks are well-understood and solvable. This article lays out the key concerns, the specific mitigations for each, and a set of best practices for deploying agentic AI safely.

Introduction: The Biggest Question in Enterprise AI Right Now

Agentic AI is everywhere. Whether you're buying a third-party product for your IT service desk, subscribing to AI tools for marketing and legal, building custom applications on top of platforms like Microsoft Copilot, or simply watching your employees sign up for ChatGPT and Gemini subscriptions on their own — agentic AI has already entered your organization.

The question isn't whether to use it. The question is how safe it is and how to use it responsibly.

This is a fair question. When AI can reason, decide, and act autonomously — accessing systems, reading data, executing workflows — the stakes are different from a traditional software deployment. Many IT leaders and CISOs are right to ask: what could go wrong?

The answer, honestly, is that some things can go wrong — in theory. But the benefits are enormous, and the risks are well-mapped and mitigable. What every enterprise needs right now isn't fear — it's a clear framework for understanding the risks and applying the right safeguards.

What Are the Real Concerns?

There are six primary concerns that organizations raise when evaluating agentic AI. Some are well-founded technical risks. Others are common preconceptions that, while understandable, are largely addressable with today's technology. Let's be precise about which is which.

Concern 1: Will My Data Be Used to Train AI Models?

This is the number one concern among enterprise buyers — and it's valid. According to Netskope's 2026 Cloud and Threat Report, nearly 47% of employees using generative AI at work are doing so through personal accounts, creating uncontrolled data flows. A LayerX security report found that 77% of employees have pasted company information into AI tools, with 82% of those using personal rather than enterprise-managed accounts.

The fear is straightforward: if employees paste confidential strategy documents, customer data, or proprietary code into a public AI tool, that data could potentially become part of the model's training set — leaking intellectual property into a global model accessible to anyone.

This is a real risk when using consumer-grade, public AI tools without governance. It is largely mitigable when using enterprise-grade AI platforms.

Concern 2: Prompt Injection and Agent Manipulation

Prompt injection remains the number one risk on the OWASP Top 10 for LLM Applications. In agentic systems, this concern escalates because agents don't just generate text — they take actions. A well-crafted malicious input could, in theory, redirect an agent's behavior.

The OWASP Top 10 for Agentic Applications (released December 2025) identifies Agent Goal Hijack as the top risk — where attackers attempt to redirect an agent's objective through manipulated inputs. This is a genuine technical challenge that the industry is actively addressing.

Concern 3: Tool Misuse and Privilege Escalation

AI agents are given access to tools — APIs, databases, workflow engines. If an agent's scope isn't properly constrained, a compromised or misconfigured agent could potentially use those tools in unintended ways. Industry data suggests that non-human identities (NHIs) outnumber human identities at a 50:1 ratio in enterprises today. AI agents represent a growing category of NHI that needs dedicated security governance.

Concern 4: Shadow AI — The Uncontrolled AI Sprawl

This may be the most practically dangerous concern. If your organization doesn't provide centralized, governed AI services, your employees will find their own solutions. Each department subscribes to different tools. Data flows into unmonitored channels. Security policies can't be enforced because IT doesn't even know the tools exist.

A survey found that over 32% of employees are using AI tools without their employer's knowledge. The average enterprise has an estimated 1,200 unofficial AI applications in use. Shadow AI breaches cost an average of $670,000 more than standard security incidents.

The parallel is instructive: this is the same dynamic that drove shadow IT adoption of cloud storage a decade ago. The lesson then — and now — is that blocking AI doesn't work. Providing secure, centralized AI services is the only sustainable approach.

Concern 5: Cascading Failures in Multi-Agent Systems

A primer on multi agent systems: Read the blog.

In environments where multiple AI agents work together, a single compromised or misconfigured agent can potentially affect downstream decision-making. Research on multi-agent system failures found that in simulated environments, a single poisoned agent could influence downstream decisions across the network within hours.

This is a real concern for organizations deploying complex multi-agent architectures, though the risk scales with the complexity and autonomy of the deployment.

Concern 6: Memory Poisoning and Data Integrity

Agents that maintain context and memory across interactions could, in theory, be manipulated by injecting false information into their memory stores. Once poisoned, the agent makes decisions based on corrupted context. This is identified by the OWASP agentic framework as a distinct risk category.

How Each Concern Can Be Mitigated

The risks above are real but solvable. Here's how each one is addressed with today's technology and enterprise practices.

Mitigation for Data Leakage to LLM Training

Enterprise-grade AI platforms solve this through private endpoints and enterprise-grade APIs. When you use a platform like Rezolve.ai, your data is processed through private, isolated instances. The architecture ensures one-way data flow — the AI model serves your queries, but no learning goes back to the global model. It's essentially a local copy of the LLM that processes your data without incorporating it into future training.

All major LLM providers (OpenAI, Anthropic, Google, Microsoft) now offer enterprise agreements that contractually guarantee your data will not be used for model training. The key is ensuring your organization actually uses the enterprise tier — not the consumer-grade subscription — and that this is enforced centrally rather than left to individual employees.

Additionally, platforms with built-in Data Loss Prevention (DLP) can detect when sensitive information is being shared with the AI and block or flag it before it leaves your environment.

Mitigation for Prompt Injection

Prompt injection cannot be fully eliminated today — language models fundamentally process instructions and data through the same channel. However, the risk can be significantly reduced through multiple layers.

Input validation and sanitization filters catch many known injection patterns before they reach the model. Action-level guardrails ensure that even if an agent's reasoning is compromised, the actions it can take are hard-limited by platform-level controls — not by the prompt itself. Sandboxed execution environments isolate agent actions so that a compromised agent can't access systems beyond its defined scope. Continuous adversarial testing (AI red-teaming) proactively identifies vulnerabilities before they're exploited in production.

The goal isn't eliminating the possibility of prompt injection — it's reducing the blast radius of a successful one so that the impact is contained and detectable.

Mitigation for Tool Misuse and Privilege Escalation

The principle of least privilege applies to AI agents just as it does to human users. Each agent should have its own identity with independently managed access rights. An agent handling password resets doesn't need access to financial systems.

Just-in-time authorization grants elevated permissions only when needed for a specific task, and those permissions expire immediately afterward. Regular access reviews specifically for agent identities — just as you would conduct for human accounts — ensure that scope doesn't creep over time.

Mitigation for Shadow AI

The most effective mitigation for shadow AI isn't blocking — it's providing a better alternative. If you provide your employees with centralized, governed AI services that are easy to use and genuinely helpful, they won't need to go find their own tools.

This means deploying enterprise AI platforms that work where employees already are — in Microsoft Teams, in Slack, via email, on the phone. The AI should be accessible, conversational, and capable. If your centrally provided AI is harder to use than ChatGPT, employees will default to ChatGPT.

On the control side, organizations should implement AI usage policies, monitor for unsanctioned AI tool adoption (just as they monitor for shadow IT), and ensure that data classification frameworks extend to AI interactions.

Mitigation for Cascading Agent Failures

Observability is the key. Multi-agent systems require comprehensive logging of inter-agent communication — what data was passed, what decisions were made, what actions were taken. Without this visibility, diagnosing cascading failures is nearly impossible.

Platforms that provide AI explainability — the ability to inspect each agent's reasoning chain — are essential for environments running multi-agent architectures. If you can see how each agent arrived at each decision, you can trace a cascade back to its source.

Mitigation for Memory Poisoning

Memory integrity checks and periodic context validation can detect when an agent's stored context has been tampered with. Some platforms implement session-scoped memory (context resets between interactions) for sensitive workflows, reducing the window of vulnerability.

Expert Insight

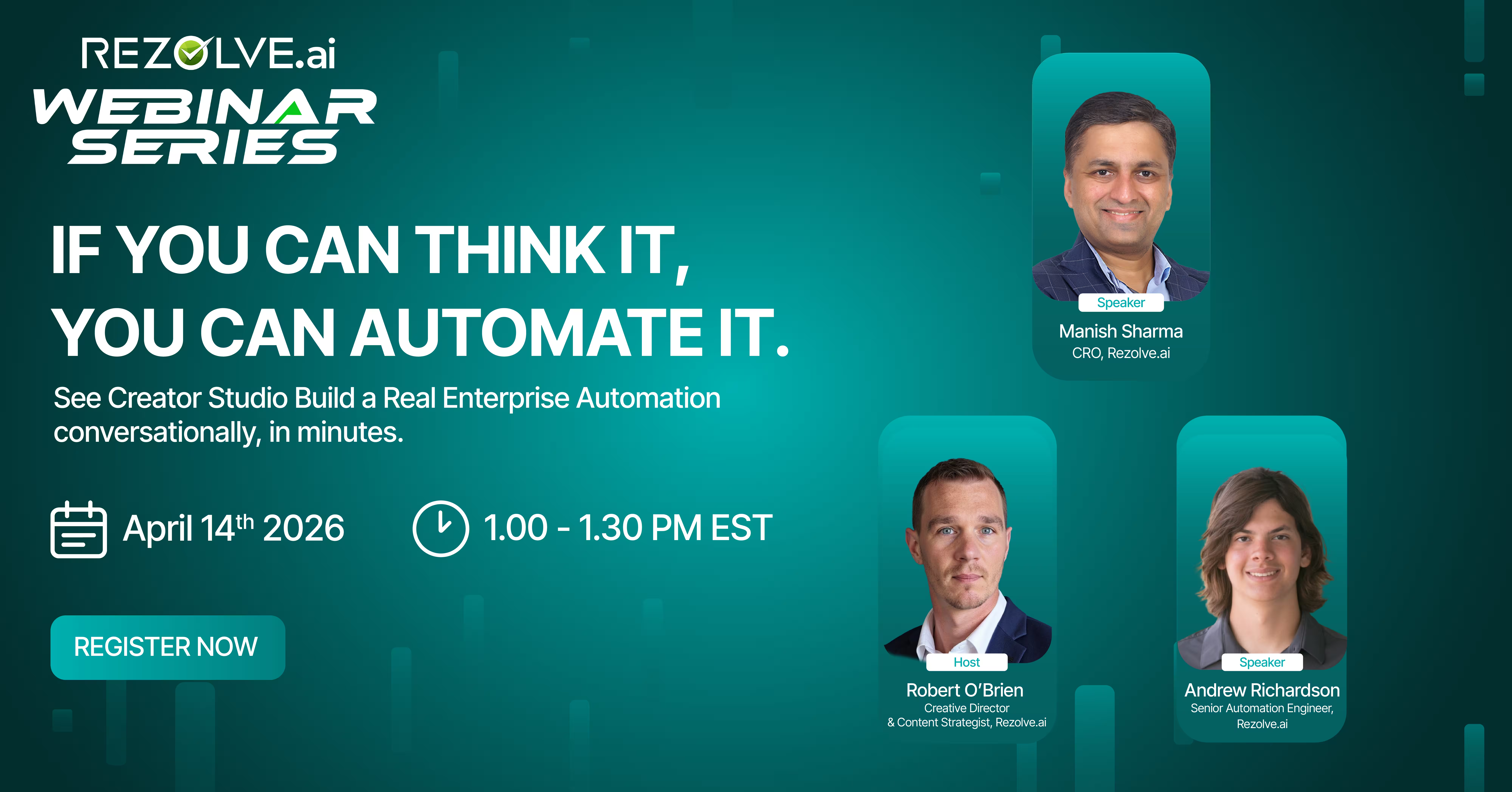

"As you move into the agentic world, security has to be enterprise-grade. Every feature has to be thought through at that level. Many people believe AI is a black box — and many products don't provide a window into how the AI is behaving and making decisions. That's not enterprise-ready. You need explainability. You need to know whether if one LLM fails, the system falls over to another. Most products haven't seen the number of cycles needed to become robust and stable. The nuances of product stability and security in an agentic world — that's what separates enterprise-grade from everything else." — Manish Sharma, CRO, Rezolve.ai

Best Practices: 7 Steps Beyond the Basics

The mitigations above address specific technical risks. These best practices go further — they're organizational and strategic habits that create a culture of safe AI deployment.

1. Treat AI as an Application, Not a Special Category

Don't overthink it. Apply the same governance you'd apply to any enterprise application: access controls, change management, monitoring, compliance checks. The one additional layer for AI is ensuring models aren't being trained on your data — everything else follows established enterprise security practice.

2. Build a Centralized AI Services Strategy

Don't wait for the perfect solution. If you don't provide AI services centrally, every department will solve the problem independently — with different tools, different security postures, and no oversight. Evaluate enterprise AI platforms, deploy them where employees already work, and make them easier to use than the consumer alternatives.

3. Demand Explainability from Every AI Vendor

If your AI vendor can't show you how their agents reason through decisions, you can't audit, govern, or improve the system. Explainability isn't a nice-to-have — it's a requirement for enterprise deployment. Rezolve.ai, for example, provides an explainability feature that reveals the reasoning process of each agent at each step.

4. Implement Multi-LLM Resilience

Don't rely on a single language model. Multi-LLM architecture provides resilience — if one model fails, degrades, or produces unreliable results, the system can route to an alternative. This also provides optionality in choosing the best model for specific tasks.

5. Establish Continuous Adversarial Testing

Static security assessments aren't sufficient for autonomous systems. Integrate adversarial testing into your CI/CD workflows. Any changes to agent configurations, prompts, or model updates should automatically trigger security validation. Monitor agent behavior in production for anomalies.

6. Classify Your Data Before AI Touches It

Not all data needs the same level of protection. Implement data classification tiers and enforce them at the AI interaction layer. Public knowledge base articles can flow freely. Customer PII, proprietary algorithms, and strategic roadmaps require enterprise-grade, private-endpoint AI processing.

7. Choose Platforms with Security Built into the Architecture

The platform you choose matters enormously. Some platforms treat security as an afterthought. Others build it in from the start. When evaluating, look for: SOC 2 Type 2 and ISO 27001 compliance, DLP capabilities within the AI layer, role-based access controls for AI agents, audit logging for every agent action, multi-LLM architecture, and AI explainability.

Rezolve.ai holds SOC 2 compliance and GDPR compliance, offers AI explainability, implements DLP within its AI layer, supports multi-LLM architecture, and provides enterprise-grade security controls through its Agentic Studio.

The Bottom Line: Don't Hesitate — But Deploy Deliberately

The shift to agentic AI is happening whether organizations are ready or not. Competitors are deploying it. Employees are already using unsanctioned tools. Executives are asking how AI is driving efficiency.

The answer isn't caution to the point of paralysis. If you don't provide governed AI services, people will find their own — and you'll lose visibility, control, and data. The risk of not deploying is becoming greater than the risk of deploying.

But deploy with the same rigor you apply to any enterprise system. Understand the specific risks. Apply the specific mitigations. Build organizational habits around safe AI use. And choose platforms where security, explainability, and governance are foundational — not bolted on after the fact.

Done right, agentic AI resolves up to 70% of support tickets autonomously, delivers 24/7 intelligent support, and frees human experts to focus on the work that genuinely requires human judgment.

See how Rezolve.ai approaches enterprise AI governance →

FAQs

1. What is agentic AI and why does it introduce new security considerations?

A. Agentic AI refers to AI systems that can reason, decide, and act autonomously — accessing tools, executing workflows, and making decisions without human intervention at each step. This introduces security considerations because autonomous agents have the ability to take actions (not just generate text), which means a compromised or misconfigured agent can have real operational impact.

2. What is shadow AI and how can organizations address it?

A. Shadow AI is the use of unsanctioned AI tools by employees without IT's knowledge or approval. Research shows the average enterprise has approximately 1,200 unofficial AI applications in use. The most effective solution isn't blocking — it's providing centralized, governed AI services that are easy to use and genuinely helpful, making the shadow tools unnecessary.

3. What compliance certifications should an agentic AI platform have?

A. At minimum, look for SOC 2 Type 2, ISO 27001, and GDPR compliance. For AI-specific governance, ISO/IEC 42001 (AI management systems) is the emerging standard. Platforms should also demonstrate readiness for the EU AI Act (enforced August 2026) and NIST AI Risk Management Framework.

4. What is AI explainability?

A. AI explainability is the ability to see how an AI agent arrived at a specific decision — what data it considered, what options it evaluated, and why it chose a particular path. For enterprise governance, this is essential for auditing, compliance, incident response, and continuous improvement.

5. Should we build or buy agentic AI?

A. For most organizations, buying a purpose-built platform is more practical than building from scratch. Building requires deep expertise in AI model management, security, multi-agent orchestration, and enterprise integration — and you inherit all the maintenance, security patching, and compliance obligations. A platform like Rezolve.ai provides this out of the box with the vendor responsible for ongoing security and compliance.

.png)

.webp)

.png)

.webp)